What Is Marketing Attribution And Why 90% of Marketers Get It Wrong

- By Tamalika Sarkar

The reason most attribution work fails is not technical. It is organizational. Companies build attribution models that answer the wrong question, then defend the answers because changing them would require difficult conversations about budget, headcount, and channel ownership.

That is the actual problem. Not the model selection. Not the tooling. The willingness to let attribution tell you something inconvenient.

Getting attribution right is not about finding the perfect model — there isn’t one. It is about building a measurement system honest enough to challenge your priors and structured enough to drive actual budget decisions. Most companies are not doing that. And the ones that are doing it well have usually been burned badly enough by doing it wrong that they had no choice but to change.

This guide breaks down where attribution fails. It explains what common models really tell you, and what they hide. You’ll also learn how to build a measurement framework that ties marketing activity to real business outcomes, not internal politics.

What Attribution Actually Is and What It Is Not

Attribution is the practice of assigning credit for a conversion across the marketing touchpoints that influenced it. A customer sees a LinkedIn ad, reads a blog post a week later, opens a retargeting email, and finally converts through a branded search. Which channel gets credit? How much? In what proportion?

Attribution attempts to answer that question systematically, rather than arbitrarily.

What attribution is not: a definitive truth about causation. Every attribution model is a simplification of reality.

The actual causal chain leading to a customer purchase is partly unmeasurable: it is influenced by conversations you did not track, competitive experiences you did not see, and timing factors your model cannot account for.

The goal is not perfection. The goal is a consistent, defensible framework that surfaces better insights than gut instinct and produces better budget decisions than last-click reporting.

This distinction matters because attribution is often sold internally as a precision science, which creates unrealistic expectations and ultimately leads to cynicism. Teams invest in sophisticated tooling, find that the outputs still require significant judgment to interpret, and conclude the whole exercise was not worth it. The framing was wrong to begin with.

The right framing: attribution is structured evidence that informs decisions, not proof that determines them.

Why the Customer Journey Makes Attribution Hard

A decade ago, the average B2C purchase involved two or three touchpoints: a search, a click, a transaction.

Attribution was relatively tractable.

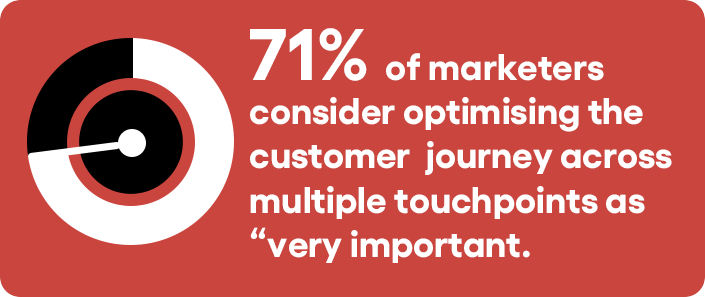

That environment no longer exists. The modern purchase journey, particularly in B2B and in considered consumer categories, involves multiple channels, multiple devices, extended time horizons, and touchpoints that span both digital and offline environments.

A customer might first encounter your brand through organic search, engage with your content over several weeks, see a paid social ad that reactivates their interest, get a referral from a colleague, and convert after a sales call.

How many of those touchpoints does your attribution model capture? Probably not all of them.

The colleague referral is invisible to your tracking. The offline touchpoints require additional instrumentation. The multi-device journey creates identity resolution challenges that most companies have not fully solved.

This is not a reason to give up on attribution.

It is a reason to hold any single attribution model with appropriate humility and to supplement quantitative attribution with qualitative research (customer surveys, post-purchase interviews, and win/loss analysis) that captures the touchpoints your tracking misses.

The companies that get attribution right tend to run both in parallel. The quantitative model directs the budget. The qualitative research challenges and refines it.

The Core Attribution Models: What Each One Is Actually Measuring

Understanding the mechanics of each model matters, but what matters more is understanding what each model incentivizes organizationally, because the model you use shapes which channels your team argues for and which ones get cut.

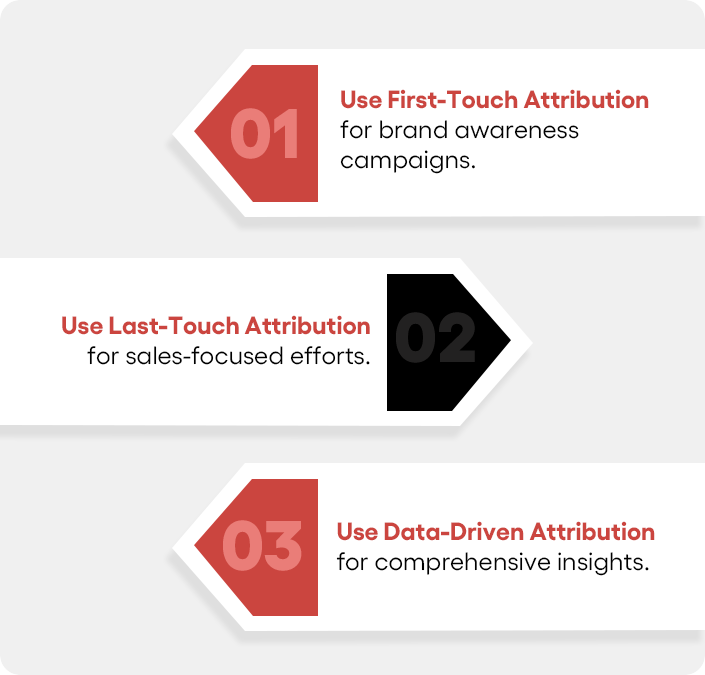

First-Touch Attribution

First-touch assigns 100% of conversion credit to the first trackable interaction. In practice, this model amplifies the apparent value of awareness-building channels: paid social, display, organic content, and PR.

What it tells you: Which channels are best at generating new audience exposure.

This is genuinely useful if your primary strategic question is about brand-building efficiency or which acquisition channels introduce you to the right audiences.

What it hides: Everything that happened between initial exposure and conversion.

Take a customer who first encountered you through a LinkedIn post and then spent three months being nurtured by email and retargeting before converting. First-touch credits LinkedIn and ignores everything else. That is a significant distortion of the actual economics.

Common organizational consequence:

Over-investment in top-of-funnel channels, under-investment in mid-funnel nurture programs.
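The first-touch rule can be sketched in a few lines of Python. This is an illustration only: the `first_touch_credit` helper and the sample journeys are hypothetical, and each journey is assumed to be an ordered list of tracked channel names that ended in a conversion.

```python
from collections import Counter

def first_touch_credit(journeys):
    """Give 100% of each conversion to the first tracked touchpoint."""
    credit = Counter()
    for path in journeys:
        if path:  # skip journeys with no tracked touchpoints
            credit[path[0]] += 1.0
    return dict(credit)

# Hypothetical converting journeys, ordered from first touch to last touch
journeys = [
    ["linkedin", "email", "retargeting", "email", "branded_search"],
    ["organic_content", "email", "branded_search"],
    ["linkedin", "retargeting", "direct"],
]

first_touch = first_touch_credit(journeys)
print(first_touch)
```

Email and retargeting appear in most of these journeys yet receive no credit at all, which is the distortion the model introduces.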

Last-Touch Attribution

Last-touch assigns 100% of credit to the final touchpoint before conversion. It is still the default in many analytics setups and the model most readily available in basic reporting tools.

What it tells you: Which channels are present at the moment of conversion.

Branded paid search and direct traffic tend to perform exceptionally well under this model, because customers who are ready to convert often search for the brand name or navigate directly before completing the transaction.

What it hides: All the earlier touchpoints that created the purchase intent.

Take a customer who saw five pieces of your content, engaged with three emails, and then converted through a branded search. Last-touch credits the branded search and ignores the content entirely. This systematically undervalues SEO, content marketing, and any channel whose primary function is building intent rather than capturing it.

Common organizational consequence:

Over-investment in bottom-of-funnel capture channels, under-investment in the content and programs that build demand in the first place.

Ironically, this can hollow out the pipeline over time — the demand generation that feeds branded search is defunded because the model cannot see it.

This is the most dangerous model to operate as a default, and it is the most common.
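One way to see what last-touch hides is to put two numbers side by side for each channel: how often it appears in converting journeys, and how much last-touch credit it receives. The sketch below assumes journeys are ordered lists of channel names; the `presence_vs_last_touch` helper and the data are hypothetical.

```python
from collections import Counter

def presence_vs_last_touch(journeys):
    """Return {channel: (share of journeys it appears in, last-touch credit share)}."""
    journeys = [path for path in journeys if path]  # ignore untracked journeys
    total = len(journeys)
    appears = Counter(ch for path in journeys for ch in set(path))
    last = Counter(path[-1] for path in journeys)
    return {ch: (appears[ch] / total, last[ch] / total) for ch in appears}

# Hypothetical converting journeys, ordered from first touch to last touch
journeys = [
    ["content", "content", "email", "branded_search"],
    ["content", "email", "email", "branded_search"],
    ["paid_social", "content", "branded_search"],
    ["content", "email", "direct"],
]

report = presence_vs_last_touch(journeys)
for channel, (presence, credit) in sorted(report.items()):
    print(f"{channel:15s} present in {presence:.0%} of journeys, last-touch credit {credit:.0%}")
```

In this made-up data, content is present in every journey and receives zero credit, while branded search collects three quarters of it.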

Linear Attribution

Linear attribution distributes credit equally across all tracked touchpoints. Five interactions means each receives 20% of the credit.

What it tells you: A broader view of channel participation across the journey. It forces recognition that multiple touchpoints contribute and prevents any single channel from monopolizing credit.

What it hides: The degree to which different touchpoints actually influence conversion. Equal distribution is almost certainly wrong, because some interactions matter more than others, but for businesses with complex journeys it is often less wrong than either single-touch model.

Linear attribution is a reasonable starting point for teams moving away from last-touch who do not yet have the data volume to support a more sophisticated model.

It is not a destination.
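The arithmetic behind linear attribution is simple enough to sketch directly. The `linear_credit` helper and the channel names are hypothetical; the only assumption is that a journey is an ordered list of tracked touchpoints.

```python
from collections import defaultdict

def linear_credit(path, conversion_value=1.0):
    """Split one conversion equally across every tracked touchpoint."""
    if not path:
        return {}
    share = conversion_value / len(path)
    credit = defaultdict(float)
    for channel in path:
        credit[channel] += share  # a channel touched twice earns two shares
    return dict(credit)

# Five interactions, so each receives 20% of the credit
path = ["linkedin", "blog", "email", "retargeting", "branded_search"]
credit = linear_credit(path)
print(credit)
```

Note the design choice in the loop: credit is assigned per touchpoint, not per channel, so a channel that appears several times in a journey accumulates more than one share.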

Time Decay Attribution

Time decay applies increasing credit to touchpoints that occurred closer to the conversion, on the assumption that recency correlates with influence.

This is a defensible assumption in some contexts. For businesses with short consideration cycles (transactional e-commerce, for example), the interactions in the final 48 hours before purchase often do reflect the decisive influences. Time decay captures that.

In B2B or in considered consumer purchases with long evaluation cycles, however, time decay systematically undervalues early-stage touchpoints that were actually instrumental in building purchase intent. A whitepaper a prospect reads three months before signing a contract may have been the most important touchpoint in the journey. Time decay gives it the least credit.
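To make the whitepaper example concrete, here is a minimal sketch of one common way to implement time decay: exponential weighting with a half-life. The 7-day half-life, the `time_decay_credit` helper, and the journey are all assumptions chosen for illustration; real tools may use a different decay curve or window.

```python
def time_decay_credit(touchpoints, half_life_days=7.0):
    """touchpoints: list of (channel, days_before_conversion) pairs."""
    weights = {}
    for channel, days_before in touchpoints:
        # A touch loses half its weight for every half-life that has passed
        weight = 0.5 ** (days_before / half_life_days)
        weights[channel] = weights.get(channel, 0.0) + weight
    total = sum(weights.values())
    return {ch: w / total for ch, w in weights.items()} if total else {}

# Hypothetical B2B journey: whitepaper read about three months before signing
journey = [("whitepaper", 90), ("demo_request", 7), ("branded_search", 0)]
credit = time_decay_credit(journey)
for channel, share in credit.items():
    print(f"{channel:15s} {share:.4%}")
```

Under these assumptions the whitepaper receives well under a tenth of one percent of the credit, regardless of how decisive it actually was.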

The practical test:

If your average sales cycle is under 30 days, time decay is probably reasonable. If your cycle is longer — particularly in B2B where multi-stakeholder evaluation is the norm — approach it with caution.

Data-Driven Attribution

Data-driven attribution uses machine learning to analyze historical conversion patterns and assigns credit based on each touchpoint’s statistical contribution to conversions. Rather than applying a fixed rule, the model infers contribution from the data itself.
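Vendors do not all use the same algorithm, and the sketch below is not any particular vendor's implementation. It shows one well-known way to infer contribution from data: Shapley values computed over observed conversion rates for each combination of channels. The `shapley_credit` helper and the conversion rates are hypothetical, and combinations with no data are assumed to convert at a rate of zero.

```python
from itertools import combinations
from math import factorial

def shapley_credit(rate_by_exposure):
    """rate_by_exposure maps frozenset(channels seen) -> observed conversion rate."""
    channels = sorted(set().union(*rate_by_exposure))
    n = len(channels)

    def value(coalition):
        # Assumption: a combination we have no data for converts at rate 0
        return rate_by_exposure.get(frozenset(coalition), 0.0)

    credit = {}
    for ch in channels:
        others = [c for c in channels if c != ch]
        total = 0.0
        for k in range(n):  # size of the coalition the channel joins
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                # Marginal lift from adding this channel to the coalition
                total += weight * (value(subset + (ch,)) - value(subset))
        credit[ch] = total
    return credit

# Hypothetical conversion rates by the set of channels a user was exposed to
rates = {
    frozenset(["email"]): 0.01,
    frozenset(["search"]): 0.02,
    frozenset(["social"]): 0.01,
    frozenset(["email", "search"]): 0.04,
    frozenset(["email", "social"]): 0.03,
    frozenset(["search", "social"]): 0.05,
    frozenset(["email", "search", "social"]): 0.08,
}
credit = shapley_credit(rates)
print(credit)
```

The credits sum to the conversion rate of users exposed to every channel, so no credit is invented or lost. The number of combinations doubles with each added channel, which is one reason this family of methods needs substantial data volume.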

The appeal is obvious: Let the data determine the weights rather than imposing a rule that may not reflect your actual customer journey. In principle, this is the most accurate available approach.

The practical constraints are real. Data-driven attribution requires sufficient conversion volume to produce statistically stable outputs. Google’s implementation has historically required a minimum of several hundred conversions per month, and results become more reliable at higher volumes.

For businesses with lower conversion volume, the model is either unavailable or unreliable.

It also requires trust in the underlying algorithm and comfort with outputs that can be difficult to explain to stakeholders. When a finance team asks why you’re increasing the budget for a channel that appears to be underperforming in simpler reports, “the machine learning model says so” is not a satisfying answer. You need to build the internal fluency to translate what the model is telling you.

For businesses with sufficient data volume, data-driven attribution is worth the investment in both tooling and organizational capability.

The gap in decision quality relative to simpler models is meaningful.

Why Most Marketers Get Attribution Wrong: The Real Reasons

The mechanics of attribution failure are frequently discussed. The organizational dynamics that cause it are less often acknowledged. Both matter.

The model default problem.

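Most teams inherit their attribution setup rather than choosing it. A last-click variant was the long-standing default in Google Analytics (Universal Analytics reports used last non-direct click), and last-touch remains the default in many CRM systems.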

Without a deliberate decision to change it, organizations end up running last-touch by accident, which means their budget decisions are systematically biased toward bottom-of-funnel capture channels. Often, this happens without anyone in the room recognizing that it is happening.

Channel ownership creates attribution politics.

Every attribution model produces winners and losers within a marketing organization.

- The team that owns paid social media wants a model that credits upper-funnel exposure.

- The team that owns paid search wants a model that credits the final click.

When model selection is influenced by who has the most political capital in the room rather than what most accurately reflects the customer journey, the output is designed to justify existing budgets rather than challenge them.

This is a governance problem, not a measurement problem. The solution involves both better tooling and clearer organizational agreements about how attribution evidence will be used in budget discussions.

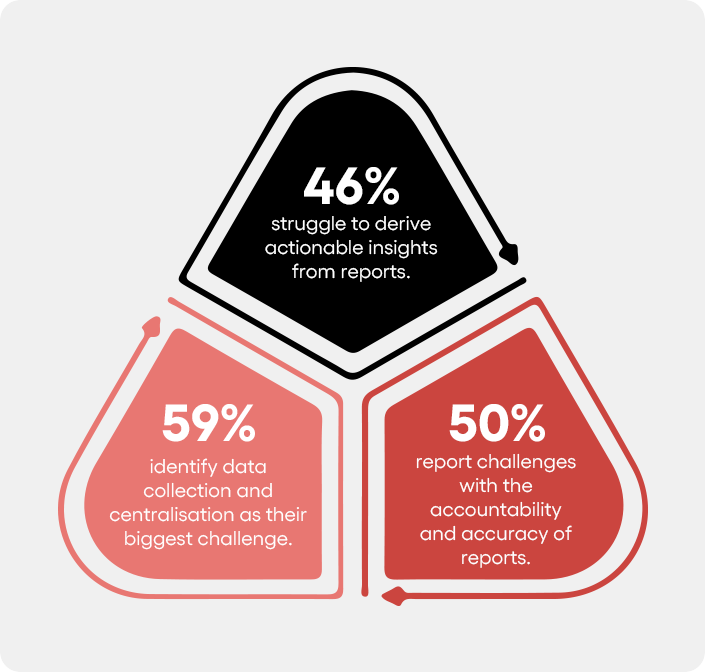

Data fragmentation is worse than most teams acknowledge.

Attribution models can only assign credit to touchpoints that are tracked. Offline interactions, partner referrals, word-of-mouth, and cross-device journeys create gaps in the data that models fill with assumptions — usually by defaulting to the last tracked touchpoint.

The practical effect is that channels generating significant influence, but limited trackable events, are systematically undercredited.

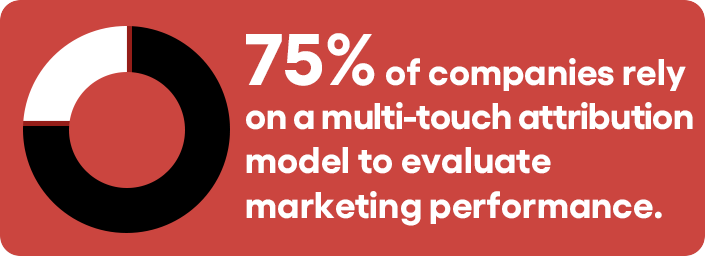

Integrating data across platforms — paid media, CRM, email, organic analytics — into a unified view is the prerequisite for any multi-touch attribution model. This is technically achievable with tools like GA4, HubSpot, or dedicated attribution platforms, but it requires instrumentation investment that many teams have not made.

Misreading the output is more common than misbuilding the model.

A well-configured data-driven model can still produce poor decisions if the team reading it does not understand what the model is measuring.

Attribution models measure correlation in historical data, not causation. A touchpoint that appears frequently in conversion paths may be a symptom of purchase intent rather than a cause of it — branded search being the clearest example.

Incrementality testing is the only way to distinguish between correlation and causal contribution, and most teams are not running it.

Building an Attribution Framework That Actually Works

The goal is not to find the objectively correct attribution model. It is to build a measurement infrastructure that consistently informs better decisions than you would make without it.

That means starting with the questions that actually matter to your business.

Define the business questions that attribution needs to answer.

Most attribution discussions focus on budget allocation across channels. But that is only part of the picture.

You also need to know:

- Which touchpoints matter at each stage of the funnel?

- Which channels attract the highest-value customers?

- What happens when you shift budget between channels?

Each of these questions requires a different analytical approach.

Match model to business context.

Short sales cycles need simpler attribution. If you’re optimizing for first purchase, time decay may be enough. But complex sales journeys require more. B2B companies with long buying cycles should use multi-touch attribution, preferably data-driven.

CRM data should support this by capturing offline interactions. Use the wrong model, and your attribution will be misleading.

Run comparison analysis before committing.

Most attribution platforms allow you to view the same conversion data under multiple models simultaneously. Before changing your primary attribution model, run this comparison for 60 to 90 days.

Look for the channels that change most significantly between models. Those are the ones where your current model is most distorted. That analysis alone often reveals where the budget is most misallocated.
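If your platform lets you export credit shares per model, the comparison can be reduced to a simple ranking. The sketch below assumes you already have each channel's share of credit under each model; the `credit_spread` helper and all the numbers are hypothetical.

```python
def credit_spread(shares_by_model):
    """Rank channels by how far their credit share moves between models."""
    channels = {ch for shares in shares_by_model.values() for ch in shares}
    spread = {}
    for ch in channels:
        values = [shares.get(ch, 0.0) for shares in shares_by_model.values()]
        spread[ch] = max(values) - min(values)
    return sorted(spread.items(), key=lambda item: item[1], reverse=True)

# Hypothetical credit shares for the same conversions under three models
shares_by_model = {
    "last_touch":  {"branded_search": 0.60, "email": 0.25, "content": 0.05, "paid_social": 0.10},
    "linear":      {"branded_search": 0.25, "email": 0.25, "content": 0.30, "paid_social": 0.20},
    "first_touch": {"branded_search": 0.05, "email": 0.10, "content": 0.45, "paid_social": 0.40},
}

ranked = credit_spread(shares_by_model)
for channel, gap in ranked:
    print(f"{channel:15s} moves {gap:.0%} between models")
```

The channels at the top of the ranking are the ones whose apparent value depends most on the model choice, so they deserve the closest scrutiny.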

Build a holdout testing program.

Attribution models show which channels appear in conversion journeys. They don’t tell you which ones actually caused the conversion. That’s where incrementality testing comes in. It measures the conversions that would not have happened otherwise.

The difference between these two views can be huge. Holdout tests are the clearest method. Withhold a channel from a randomly selected control group, then compare that group's conversion rate with the rate among customers who were still exposed to it.

Start with your biggest spend channels. That’s where the stakes are highest.
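Reading a holdout test comes down to comparing two conversion rates and checking that the gap is larger than chance would explain. The sketch below uses a standard two-proportion z-test; the `holdout_lift` helper and the sample sizes are hypothetical, and it assumes users were randomly assigned to the exposed and holdout groups.

```python
from math import erf, sqrt

def holdout_lift(exposed_users, exposed_conv, holdout_users, holdout_conv):
    """Compare conversion rates between exposed and holdout groups."""
    rate_exposed = exposed_conv / exposed_users
    rate_holdout = holdout_conv / holdout_users
    lift = rate_exposed - rate_holdout
    # Conversions that would not have happened without the channel
    incremental = lift * exposed_users
    # Two-proportion z-test using the pooled conversion rate
    pooled = (exposed_conv + holdout_conv) / (exposed_users + holdout_users)
    std_err = sqrt(pooled * (1 - pooled) * (1 / exposed_users + 1 / holdout_users))
    z = lift / std_err if std_err else 0.0
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return {"lift": lift, "incremental": incremental, "z": z, "p_value": p_value}

# Hypothetical test: 50,000 users kept seeing the channel, 10,000 were held out
result = holdout_lift(exposed_users=50_000, exposed_conv=1_000,
                      holdout_users=10_000, holdout_conv=150)
print(result)
```

In this made-up example the exposed group produced 1,000 conversions, but only about 250 of them are incremental. The rest would have happened anyway, which is the gap between attributed credit and causal contribution.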

Integrate qualitative research.

Attribution models cannot see everything. Customers can fill in the gaps.

- Use post-conversion surveys.

- Conduct post-purchase interviews.

- Review win/loss feedback on major deals.

These sources uncover influences analytics tools miss.

Start with two simple questions:

- “How did you first hear about us?”

- “What influenced your decision to purchase?”

The answers often contradict the model.

That’s a good thing. Run both approaches. When they disagree, pay attention. That’s where the most useful insights usually live.
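A simple way to surface those disagreements is to tally coded survey answers and compare them with the model's credit shares. The sketch below is illustrative only: the `survey_vs_model` helper, the answer categories, and the model shares are hypothetical, and it assumes free-text answers have already been coded into channel labels.

```python
from collections import Counter

def survey_vs_model(survey_answers, model_share):
    """Rank channels by the gap between self-reported share and model share."""
    counts = Counter(survey_answers)
    total = sum(counts.values())
    survey_share = {ch: n / total for ch, n in counts.items()}
    channels = set(survey_share) | set(model_share)
    gaps = {ch: survey_share.get(ch, 0.0) - model_share.get(ch, 0.0) for ch in channels}
    return sorted(gaps.items(), key=lambda item: abs(item[1]), reverse=True)

# Hypothetical coded answers to "How did you first hear about us?"
answers = (["colleague_referral"] * 4 + ["organic_search"] * 3
           + ["paid_social"] * 2 + ["podcast"])
# Hypothetical first-touch credit shares from the attribution model
model_first_touch = {"organic_search": 0.5, "paid_social": 0.4, "email": 0.1}

gaps = survey_vs_model(answers, model_first_touch)
for channel, gap in gaps:
    print(f"{channel:20s} survey minus model: {gap:+.0%}")
```

A large positive gap, such as the colleague referrals here, points to influence the tracking cannot see. A large negative gap suggests the model may be over-crediting a channel.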

Establish governance before you need it.

Define the rules before the budget conversation starts.

- How much model confidence is needed before reallocating spend?

- Who interprets conflicting signals?

- How should teams present attribution data?

Without these guardrails, attribution becomes a debate. Budget decisions get driven by the best argument, not the best evidence.

The Attribution Decisions Worth Making in the Next 90 Days

Most organizations can meaningfully improve their attribution framework without a major technology investment.

The highest-leverage near-term actions:

Audit your current default model and be honest about what it is incentivizing.

If you are running last-touch by default, identify which channels are most likely being under-credited as a result. That is where you should look first for reallocation opportunities.

Assess your data integration.

Are all major channels — paid media, email, organic, CRM, offline — contributing data to your attribution model? Identify the largest gaps and prioritize closing the ones most likely to be hiding significant influence.

Run at least one holdout test this quarter.

Ideally, this should be done on a channel where attribution model credit and anecdotal evidence feel misaligned. The investment is modest, and the potential learning is high.

Build internal fluency around what your attribution model is and is not measuring.

The people making budget decisions based on attribution outputs need to understand the assumptions embedded in those outputs. That understanding changes how evidence gets interpreted and how confidently budget shifts get made.

Attribution done well is one of the more durable competitive advantages in marketing. When you are making better budget decisions than your competitors because you have better evidence about what is actually driving conversions, that advantage compounds over time. The gap between companies that measure attribution carefully and those that default to last-click is real, and it grows.

Ready to Test Your Attribution Setup?

If you want to pressure-test your current attribution setup against how it’s actually influencing budget decisions, and whether those decisions are serving the business, that review is worth doing before the next planning cycle.
