
Google Ends num=100: What Broke and How to Fix Your Data Stack

- By Tamalika Sarkar


Google’s removal of the num=100 parameter in September 2025 did not just break a technical workaround. It broke a measurement methodology that the SEO industry had built workflows, reporting frameworks, and client expectations around for over a decade.

The tools that relied on pulling 100 results per query to track rankings, map competitive SERP landscapes, and build keyword databases stopped working the way they were designed to work.

And the teams that had never stress-tested whether their data infrastructure could function on less information than they were accustomed to found out the answer quickly.

The practical impact varied enormously by program type:

- Teams tracking a focused set of high-priority keywords against the top 10 results barely noticed.

- Teams running enterprise-scale rank tracking across thousands of keywords, or using automated SERP scraping as the backbone of their competitive intelligence, faced a genuine operational disruption.

The difference was not sophistication. It was dependency concentration on a single data source that was always, in hindsight, provisional.

This piece explains what the change actually altered, how industry impact figures should be interpreted, what the adapted data stack now looks like, and how the shift in data affects the SEO metrics that matter.

What the num=100 Parameter Was and Why Its Removal Matters

The num parameter in a Google search URL controlled how many results appeared per page. Setting num=100 returned up to 100 results in one request.

This allowed rank tracking tools and SERP scrapers to capture the competitive landscape quickly. Instead of loading ten pages of results, they could retrieve everything in a single call.
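To make the difference concrete, here is a minimal sketch of the two request shapes. It only builds URL strings and sends nothing. The `start` offset is the commonly used pagination parameter, and the helper names are hypothetical.

```python
from urllib.parse import urlencode

BASE_URL = "https://www.google.com/search"


def legacy_single_request(query: str) -> str:
    """URL shape that used to ask for up to 100 results in one call."""
    return f"{BASE_URL}?{urlencode({'q': query, 'num': 100})}"


def paginated_requests(query: str, depth: int = 100, page_size: int = 10) -> list[str]:
    """URLs needed to cover the same depth at ten results per page."""
    return [
        f"{BASE_URL}?{urlencode({'q': query, 'start': offset})}"
        for offset in range(0, depth, page_size)
    ]


if __name__ == "__main__":
    print(legacy_single_request("crm software"))
    for url in paginated_requests("crm software"):
        print(url)
```

One request becomes ten for the same depth, which is why per-keyword collection cost rose so sharply for tools that tracked deep positions.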

This was the foundation of several SEO workflows:

- Rank tracking at depth beyond position 10.

- Competitive content gap analysis that required knowing who ranked 40 through 80 for a query, not just who ranked in the top 10.

- SERP feature mapping across the full result set.

- Bulk keyword difficulty estimation based on the authority profile of all 100 ranking domains.

Google said the change improves efficiency and user experience. Most users never go beyond the first results page. Returning 100 results consumed server resources with little benefit.

Now, num=100 requests return only about 10–30 results for competitive queries. For major keywords, the count can be even lower due to AI Overviews and feature-rich SERP elements.

The industry numbers reported after the change need careful interpretation. Widely cited figures, such as roughly 87% of sites seeing impression drops and 77% losing unique ranking terms, reflect changes in what tools and reports can measure. They do not necessarily indicate real performance declines. Many rankings simply fall outside the smaller result set that tools can now access. This is a data coverage issue, not a ranking loss.
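One way to keep coverage gaps from being reported as losses is to label them separately. The sketch below is an illustration only: the function name, the labels, and the `coverage_depth` parameter are assumptions, not features of any particular rank tracker.

```python
from typing import Optional


def classify_change(previous: Optional[int], current: Optional[int], coverage_depth: int) -> str:
    """Label a keyword's change given how deep the tool can currently see.

    previous / current are observed positions, or None when the keyword
    was not found within the tool's visible result set.
    """
    if current is not None:
        if previous is None:
            return "newly observed"
        if current < previous:
            return "improved"
        if current > previous:
            return "declined"
        return "unchanged"
    if previous is None:
        return "not observed"
    if previous > coverage_depth:
        # The last known position was already deeper than the tool can now see,
        # so its absence says nothing about real performance.
        return "outside coverage"
    # The keyword was inside the visible range and has disappeared from it.
    return "possible loss"


if __name__ == "__main__":
    print(classify_change(previous=45, current=None, coverage_depth=20))
    print(classify_change(previous=8, current=None, coverage_depth=20))
```

Reporting "outside coverage" as its own category keeps a shrinking result set from showing up as a wave of apparent ranking drops.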

Why Many Teams Built Fragile Data Infrastructures

The num=100 removal exposed a dependency problem that predated the change. Many SEO programs had evolved to treat the ability to pull 100 results as a permanent, reliable feature of working with Google search data, rather than as an informal capability that Google could revise without notice.

This manifested in several ways.

- Many rank tracking setups monitored thousands of keywords across all 100 SERP positions, producing far more data than anyone acted on.

- Reporting often relied on average position, a noisy metric that says little about traffic.

- Competitive analysis tracked sites ranking in positions 50 through 100, data that rarely informed decisions.

The more fundamental problem is that “volume of data” and “quality of insight” are not the same thing.

Programs that were processing 100 results per keyword were not necessarily generating better strategic decisions than programs working with the top 20.

In many cases, the large data sets were producing reporting complexity that obscured the signals that actually mattered. This included which keywords were generating organic traffic, which traffic was converting, and what content changes would move those metrics.

The disruption of the num=100 removal is, in this framing, forcing a conversation about measurement philosophy that the industry needed to have regardless of Google’s technical changes.

What the Adapted Data Stack Looks Like

The programs that have moved past the initial disruption are not simply substituting one data source for another.

They are running multi-source data models that treat no single input as foundational, cross-verifying signals across tools, and prioritizing metrics that connect more directly to revenue outcomes than position numbers ever did.

| Data Source | Cost | Best For | Limitation |
| --- | --- | --- | --- |
| Google Search Console | Free | Actual clicks, impressions, CTR, and average position from Google’s own index | 16-month data retention; 1000-row default export limit; no competitor data |
| SERP APIs (DataForSEO, SerpApi) | Usage-based pricing | Structured, legally compliant SERP data including features, PAA, local pack | Cost per query adds up at scale; no historical depth without archiving |
| Ahrefs / SEMrush | Monthly subscription | Keyword universe, competitor keyword gaps, backlink profiles, trend data | Third-party index, not Google’s; some data lag and estimation involved |
| Rank tracking tools (STAT, Accuranker) | Monthly subscription | Position monitoring across keyword sets with SERP feature tracking | Limited to subscribed keywords; field data, not scraped Google results |
| First-party analytics (GA4, Heap) | Free to tiered | Actual user behavior, conversion paths, session data from real visitors | No keyword-level granularity without GSC integration; no competitor view |
| Manual SERP review | Staff time only | Intent analysis, content structure patterns, SERP feature observation for high-value keywords | Not scalable; limited to spot checks on priority queries |

The most important shift in the adapted stack is the elevation of Google Search Console as a primary data source rather than a supplementary one.

GSC provides actual click, impression, and CTR data from Google’s own index for the queries where your site appears. It is the only data source that reflects what Google actually did with your content, rather than what a third-party tool observed or estimated.

Programs that had been treating GSC as a reporting add-on rather than a measurement foundation are rebuilding their reporting architectures around it.

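Google Search Console has limits. The interface exports at most 1,000 rows per report, and data is retained for 16 months. These constraints can restrict deeper analysis.

Connecting Search Console to BigQuery through the bulk data export works around both limits. It allows full data exports, retention for as long as you choose to store the data, and deeper segmentation than the GSC interface. The export only accumulates data from the day it is enabled, so it is worth setting up before the history is needed.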
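As a rough illustration of what exported data makes possible, the sketch below aggregates rows shaped like the bulk export's site-level table. The field names (`query`, `impressions`, `clicks`, `sum_top_position`) and the zero-based position convention follow the export schema as I understand it; treat them as assumptions and check them against your own tables. The sample rows are made up.

```python
from collections import defaultdict


def average_position_by_query(rows):
    """Aggregate export-style rows into per-query clicks, CTR, and average position."""
    totals = defaultdict(lambda: {"impressions": 0, "clicks": 0, "sum_top_position": 0})
    for row in rows:
        if not row.get("query"):
            # Assumption: anonymized queries arrive with an empty query value.
            continue
        bucket = totals[row["query"]]
        bucket["impressions"] += row["impressions"]
        bucket["clicks"] += row["clicks"]
        bucket["sum_top_position"] += row["sum_top_position"]

    summary = {}
    for query, bucket in totals.items():
        if bucket["impressions"] == 0:
            continue
        summary[query] = {
            "impressions": bucket["impressions"],
            "clicks": bucket["clicks"],
            "ctr": bucket["clicks"] / bucket["impressions"],
            # sum_top_position is assumed zero-based, so add 1 for a 1-based average.
            "avg_position": bucket["sum_top_position"] / bucket["impressions"] + 1,
        }
    return summary


if __name__ == "__main__":
    sample_rows = [  # made-up rows for illustration
        {"query": "crm software", "impressions": 100, "clicks": 10, "sum_top_position": 200},
        {"query": "crm software", "impressions": 100, "clicks": 5, "sum_top_position": 400},
        {"query": None, "impressions": 50, "clicks": 1, "sum_top_position": 900},
    ]
    print(average_position_by_query(sample_rows))
```

Averaging from summed positions and impressions, rather than averaging daily averages, keeps high-impression days from being underweighted.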

Adapting Core SEO Workflows to the New Data Environment

| Workflow Area | Before num=100 Removal | Adapted Approach |
| --- | --- | --- |
| Rank tracking | Pull 100 positions per keyword across full SERP; track decimal-level average position | Track top 20 positions through rank tracking tools adapted to new limits; supplement with GSC average position and CTR modelling |
| Competitor analysis | Scrape full 100-result SERP for target keywords to map competitive landscape | Use SERP APIs (DataForSEO, SerpApi) for structured data; combine with Ahrefs and SEMrush keyword overlap reports |
| Keyword research | Extract all 100 results per query to identify ranking patterns and content gaps | Analyze top 20 results with deeper intent analysis; use keyword clustering by topic rather than individual term tracking |
| Reporting | Average position across hundreds of keywords as primary performance metric | Organic traffic to commercial-intent pages, CTR by keyword cluster, conversion attribution from organic; visibility share over numeric rank |
| SERP feature analysis | Manual or scraped review of all 100 results for featured snippet, PAA, and local pack presence | Structured SERP API data with feature-specific filtering; GSC search appearance segmentation for rich result types |
| Content gap analysis | Compare the client domain against all 100 results to find unranked opportunities | Topic cluster analysis using GSC query data and keyword tools; intent-layer mapping rather than position-slot comparison |

Rank Tracking: From Position Numbers to Visibility Metrics

The most practically significant workflow change is in rank tracking. Tools that depended on fetching deep SERP positions have updated their methodologies, but the more important change is in what teams choose to track and how they interpret what they see.

Tracking numeric rank position matters most in the range where position changes have meaningful CTR consequences: positions 1 through 10, and especially 1 through 3.

The difference in organic click-through rate between position 1 and position 3 is substantial and well-documented. The difference between position 42 and position 45 is essentially irrelevant to traffic or revenue outcomes.

Programs that were devoting tracking and reporting resources to deep SERP positions were optimizing for data precision in a range that has no commercial significance.

The updated approach prioritizes tracking top SERP positions that drive traffic. It also monitors SERP features such as Featured Snippets, People Also Ask, and Local Pack results. Finally, GSC impression and click data confirm whether ranking changes lead to real traffic impact.
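A visibility metric of this kind can be sketched as a CTR-weighted share of the traffic available across a keyword set. The CTR curve below is an illustrative assumption, not a measured benchmark. The field names (`volume`, `position`) and the sample keywords are hypothetical.

```python
# Assumed CTR by position, for illustration only; replace with your own curve.
ASSUMED_CTR_BY_POSITION = {
    1: 0.28, 2: 0.16, 3: 0.12, 4: 0.08, 5: 0.06,
    6: 0.04, 7: 0.03, 8: 0.03, 9: 0.02, 10: 0.02,
}


def visibility_share(keywords):
    """Return estimated captured clicks as a fraction of the position-1 maximum.

    Each keyword is a dict with a monthly search "volume" and a "position"
    (None when the site is not observed in the top 10).
    """
    best_ctr = ASSUMED_CTR_BY_POSITION[1]
    captured = 0.0
    available = 0.0
    for kw in keywords:
        ctr = ASSUMED_CTR_BY_POSITION.get(kw["position"], 0.0)
        captured += kw["volume"] * ctr
        available += kw["volume"] * best_ctr
    return captured / available if available else 0.0


if __name__ == "__main__":
    tracked = [  # made-up keyword set
        {"keyword": "crm software", "volume": 1000, "position": 1},
        {"keyword": "crm pricing", "volume": 1000, "position": None},
    ]
    print(f"{visibility_share(tracked):.0%}")
```

Because positions beyond the top 10 contribute nothing under this curve, the metric is unaffected by whether deep positions can still be observed.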

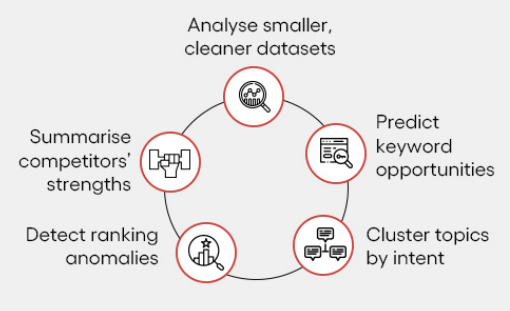

Keyword Research: Clusters Over Individual Terms

Traditional keyword research created large lists of individual keywords. Each term was then tracked across a 100-result SERP.

Today, many teams use a topic cluster approach instead. Performance is tracked at the cluster level, not for every single keyword.

The practical shift is from asking “where do we rank for this specific keyword?” to asking “are we capturing traffic across this topic area, and is the content we have serving the range of intent signals in this cluster?”

The latter question is more strategically useful and does not require deep SERP access to answer. GSC query data, combined with keyword tool estimates for the cluster, provides sufficient signal to make content investment decisions.
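Cluster-level tracking can be approximated by rolling GSC query rows up into topic groups. The sketch below uses naive substring matching. The cluster names, match terms, and sample rows are hypothetical, and a real setup would likely need a more careful mapping.

```python
from collections import defaultdict

# Hypothetical clusters and match terms, for illustration only.
CLUSTER_TERMS = {
    "pricing": ("pricing", "cost", "price"),
    "integrations": ("integration", "api", "connect"),
}


def assign_cluster(query: str) -> str:
    """Return the first cluster whose terms appear in the query."""
    lowered = query.lower()
    for cluster, terms in CLUSTER_TERMS.items():
        if any(term in lowered for term in terms):
            return cluster
    return "unclustered"


def cluster_performance(rows):
    """Sum clicks and impressions per cluster and derive cluster-level CTR."""
    totals = defaultdict(lambda: {"queries": 0, "clicks": 0, "impressions": 0})
    for row in rows:
        bucket = totals[assign_cluster(row["query"])]
        bucket["queries"] += 1
        bucket["clicks"] += row["clicks"]
        bucket["impressions"] += row["impressions"]
    return {
        cluster: {
            **bucket,
            "ctr": bucket["clicks"] / bucket["impressions"] if bucket["impressions"] else 0.0,
        }
        for cluster, bucket in totals.items()
    }


if __name__ == "__main__":
    gsc_rows = [  # made-up query rows
        {"query": "crm pricing", "clicks": 10, "impressions": 100},
        {"query": "crm cost per user", "clicks": 5, "impressions": 100},
        {"query": "crm api docs", "clicks": 2, "impressions": 50},
    ]
    for name, stats in cluster_performance(gsc_rows).items():
        print(name, stats)
```

The "unclustered" bucket is worth reviewing periodically, since it tends to surface topics the content plan has not yet named.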

Topic clustering also aligns better with how modern ranking works. A well-structured hub-and-spoke content architecture around a topic builds collective authority that lifts the entire cluster, rather than optimizing individual pages for individual keywords in isolation. The data constraint is pushing the keyword strategy toward an approach that was already a better practice.

Competitive Analysis: Depth Where It Matters

Competitive SERP analysis against 100-position result sets generated information that rarely translated into strategic action. Knowing that a competitor ranks in positions 40 through 60 for a keyword where you rank in positions 1 through 5 is not actionable intelligence.

Knowing that a competitor has SERP features you do not, or that they rank for an intent cluster where you have no content, is.

The updated approach focuses on the top 20 results for priority keywords. It uses SERP API data to analyze features and content patterns. Teams also run keyword gap analysis with tools like Ahrefs and Semrush.

This helps identify topic areas where competitors have coverage and you do not. It is also more targeted and more strategically useful than deep SERP scraping was.

Rebuilding the Reporting Framework After the Disruption

A mid-market B2B software company had built its SEO reporting around weekly rank tracking across 600 keywords, reported to leadership as average position and position change over time.

When the num=100 change caused position data to become unavailable or unreliable for keywords ranked beyond position 30, the weekly reports began showing apparent position drops across a large portion of the tracked keyword set.

The position drops triggered a leadership conversation about whether the SEO program was working. The SEO team had to explain that the changes were a measurement artifact rather than a performance decline.

This is a difficult conversation when the data you are using to prove the point is the same data being questioned.

The program rebuilt its reporting framework in six weeks. The keyword set was reduced to 120 high-priority terms tied to key topic clusters.

Organic traffic to commercial pages became the primary metric. Demo requests from organic search were tracked as the conversion metric. GSC CTR data was used as the leading indicator.

Position tracking remained for 50 priority keywords, but only as a directional signal instead of a primary KPI.

The new framework was easier to defend in leadership conversations because every metric connected to a revenue outcome. It was also less sensitive to the kind of data availability disruptions that had created the false-alarm scenario.

CTR Modelling as a Rank Estimation Approach

One of the more practically useful adaptations that emerged from the num=100 disruption is using click-through rate data from GSC to estimate approximate rank position for keywords where direct position tracking is unavailable or unreliable.

The relationship between organic CTR and rank position is well-documented.

Position 1 organic results receive roughly 25 to 30 percent of clicks for navigational and informational queries; position 3 receives 10 to 15 percent; beyond position 5, CTR drops below 5 percent for most query types.

This relationship is not precise enough for decimal-level rank tracking, but it is reliable enough to categorize keywords into meaningful rank bands: top 3, positions 4 through 10, positions 11 through 20.

Using GSC CTR data combined with impression volume to estimate a rank band for each keyword provides a workable approximation that does not require SERP scraping. For keywords with meaningful impression volume, a CTR around 20 to 30 percent suggests a top 3 position; a CTR around 5 to 10 percent suggests positions 4 through 10; a CTR under 2 percent suggests positions 11 and beyond. CTRs that fall between these ranges are ambiguous and are better left unclassified than forced into a band.

This is less precise than position tracking, but sufficient for prioritization decisions about where to invest content optimization resources.
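The banding described above can be sketched as a small function. The CTR thresholds come from the ranges in this section. The `min_impressions` cutoff, the band labels, and the "ambiguous" fallback for CTRs between the stated ranges are assumptions added for illustration.

```python
def estimate_rank_band(clicks: int, impressions: int, min_impressions: int = 200) -> str:
    """Map a keyword's GSC clicks and impressions to an approximate rank band."""
    # Assumption: below this impression volume the CTR is too noisy to classify.
    if impressions < max(min_impressions, 1):
        return "insufficient data"
    ctr = clicks / impressions
    if ctr >= 0.20:
        return "top 3"
    if 0.05 <= ctr <= 0.10:
        return "positions 4-10"
    if ctr < 0.02:
        return "positions 11+"
    # CTRs between the stated ranges do not map cleanly to one band.
    return "ambiguous"


if __name__ == "__main__":
    samples = {  # made-up (clicks, impressions) pairs
        "crm software": (60, 250),
        "crm pricing": (20, 250),
        "crm api docs": (2, 250),
        "crm migration": (35, 250),
    }
    for keyword, (clicks, impressions) in samples.items():
        print(keyword, "->", estimate_rank_band(clicks, impressions))
```

Keywords that land in "ambiguous" are reasonable candidates for a manual SERP check or a comparison against GSC average position.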

The Strategic Reframe: What This Change Is Actually Forcing

The removal of num=100 is accelerating a shift in SEO measurement philosophy that was already necessary.

Rank position as a primary KPI was always a proxy metric, useful as a leading indicator but not a business outcome in itself. The compulsion to track hundreds of keywords at deep position resolution created data volume that required significant processing time and produced reports that were rarely translated into prioritized action.

Programs that adapt well will focus on metrics that drive real business outcomes. The priority is organic traffic to pipeline-generating pages. Teams also track conversion rates from organic visitors by page type. Another key metric is cost per customer from organic vs paid channels. Finally, programs measure revenue attributed to organic search.

These metrics require a different data infrastructure than rank tracking, but they connect SEO performance to commercial outcomes in ways that rank reports never could.

There is also a useful forcing function in the data limitation itself.

When you cannot easily see positions 40 through 100, you are implicitly directed to focus resources on the keywords and content where you are competitive enough to be in the range where position changes produce traffic changes. That is usually a smaller, higher-priority set than programs were tracking before.

The SEO programs that have responded to the num=100 change by rebuilding around fewer, more commercially relevant metrics generally find that their conversations with leadership have improved.

Explaining a 0.3-point average position improvement across 600 keywords to a CMO was always difficult. Explaining that the organic pipeline contribution increased 18 percent quarter over quarter is not.

Building First-Party Data Capabilities Alongside External Tools

One of the underappreciated consequences of Google’s progressive reduction of external data access, of which num=100 is one instance, is that the organizations with strong first-party data capabilities have a compounding advantage over those that depended on third-party data access.

First-party data in an SEO context means behavioural data from your own site visitors: session recordings, heatmaps, scroll depth analysis, conversion path analysis, and cohort-level engagement metrics.

This data does not depend on Google’s access policies. It is not affected by changes to search result pagination, and it captures the actual experience of your organic traffic in a way that rank position data never did.

Combining first-party behavior data with GSC query data reveals how search traffic actually performs. It shows which queries drive visits and how users behave after landing. Teams can identify which pages and query clusters generate the most engagement and conversions. It also highlights intent mismatches that cause high bounce rates. Rank tracking alone cannot provide these insights.
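A minimal sketch of that combination is a join on landing page between GSC rows and analytics metrics, flagging pages that attract clicks but lose visitors quickly. The field names (`page`, `clicks`, `bounce_rate`), the thresholds, and the sample data are hypothetical and would need to match your own exports.

```python
def flag_intent_mismatches(gsc_rows, analytics_by_page, min_clicks=50, min_bounce_rate=0.65):
    """Return pages with meaningful organic clicks and a high bounce rate.

    gsc_rows: dicts with "page", "query", and "clicks".
    analytics_by_page: mapping of page URL to a dict with "bounce_rate" (0 to 1).
    """
    flagged = []
    for row in gsc_rows:
        stats = analytics_by_page.get(row["page"])
        if stats is None or row["clicks"] < min_clicks:
            continue  # no analytics match, or too little traffic to judge
        if stats["bounce_rate"] >= min_bounce_rate:
            flagged.append({
                "page": row["page"],
                "query": row["query"],
                "clicks": row["clicks"],
                "bounce_rate": stats["bounce_rate"],
            })
    # Highest-traffic mismatches first, since they cost the most visits.
    return sorted(flagged, key=lambda item: item["clicks"], reverse=True)


if __name__ == "__main__":
    gsc = [  # made-up rows
        {"page": "/pricing", "query": "crm pricing", "clicks": 120},
        {"page": "/blog/what-is-crm", "query": "free crm download", "clicks": 300},
    ]
    analytics = {
        "/pricing": {"bounce_rate": 0.30},
        "/blog/what-is-crm": {"bounce_rate": 0.82},
    }
    for item in flag_intent_mismatches(gsc, analytics):
        print(item)
```

A flagged page is a prompt for review, not a verdict: a high bounce rate can also mean the visitor found the answer immediately.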

Long-term SEO success requires a strong first-party data infrastructure. Set up GA4 to capture meaningful engagement events. Integrate analytics with a CRM to track the pipeline that organic search generates.

Regularly analyze user behavior by traffic segment. This investment delivers the most durable, compounding returns for SEO.

Unlike third-party SERP data access, which Google can revise at any point, your own behavioural data is yours.

See What Your SEO Data Infrastructure Should Look Like Now

If your SEO reporting framework was built around rank tracking and SERP data that the num=100 change disrupted, rebuilding it correctly is a meaningful strategic opportunity. The programs that respond by reducing tracked keyword sets, elevating GSC and first-party data, and connecting organic metrics to revenue outcomes are building measurement infrastructure that will compound over time. If you want a clear view of where your current data stack has gaps and what the rebuilt version should look like, that conversation is worth having before the next disruption makes the decision for you.

→ Request an SEO measurement framework review

CEO of Nico Digital and founder of Digital Polo, Aditya Kathotia is a trailblazer in digital marketing.

He’s powered 500+ brands through transformative strategies, enabling clients worldwide to grow revenue exponentially.

Aditya’s work has been featured on Entrepreneur, Hubspot, Business.com, Clutch, and more. Join Aditya Kathotia’s orbit on Twitter or LinkedIn to gain exclusive access to his treasure trove of niche-specific marketing secrets and insights.
